Professional Portfolio Web Applications and Software I made for others

2006, 2007, 2008: Microsoft Imagine Cup

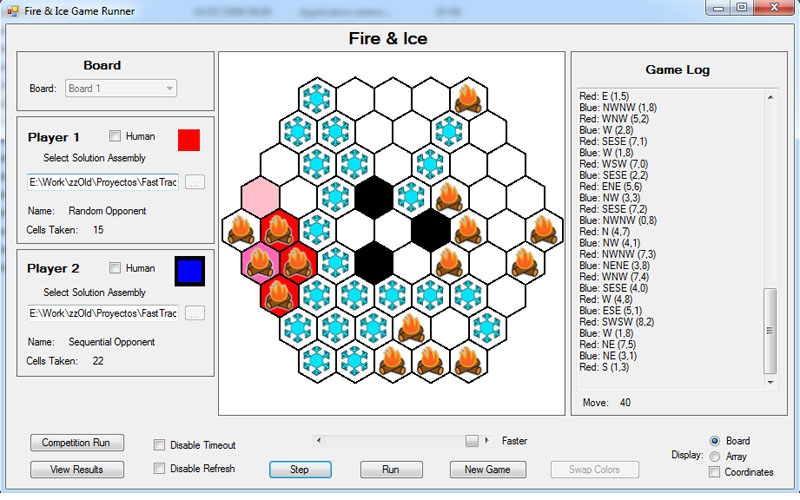

ASP.NET 2.0, .NET Windows and Console Applications

2008 Finals: Multiplayer competition. Each contestant wrote an AI to conquer as much board territory as possible, and the AIs played against each other.

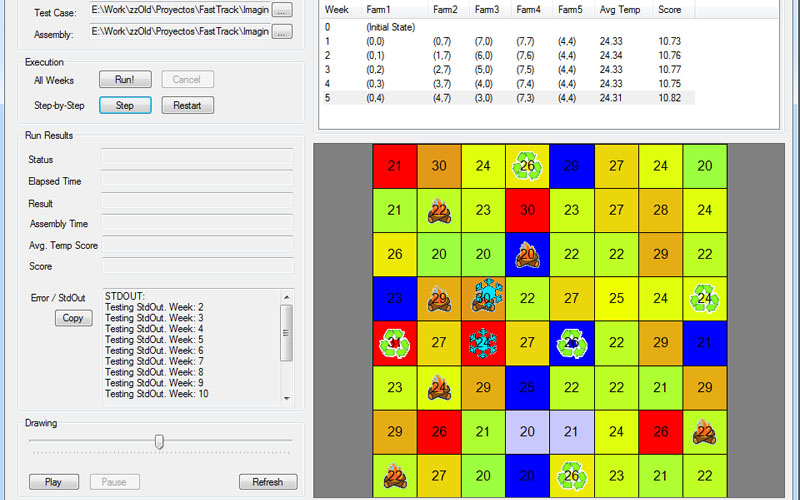

2008 Round 2: The competition theme for 2008 was the environment. The contestants needed to find the best way to cool a certain area by planning the movements of "cooling machines".

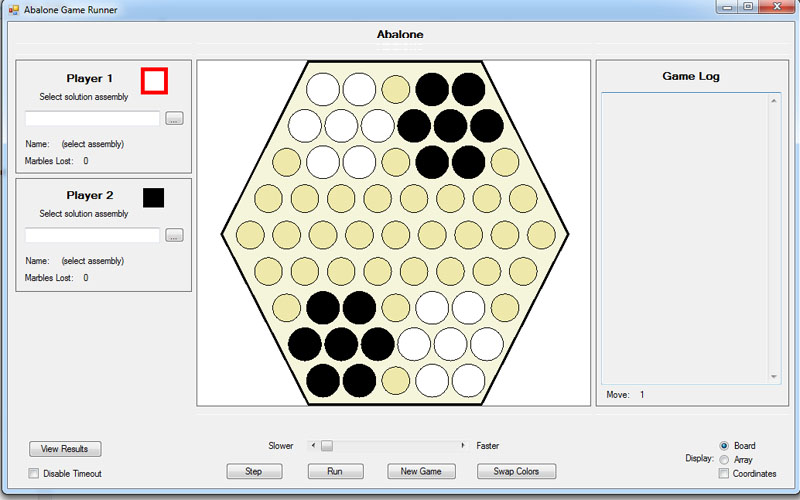

2007 Finals: Multiplayer competition. The contestants' AIs played the famous board game Abalone against each other.

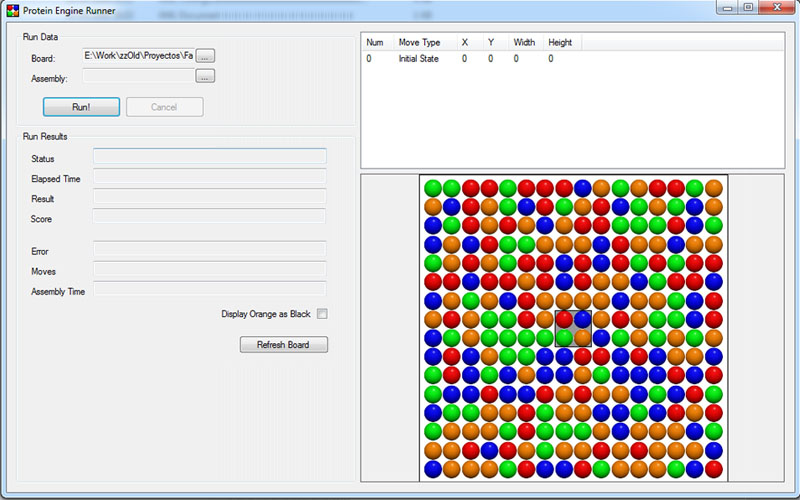

2006 Round 2: The theme was health. Contestants had to find the best possible way to make moves on a board of marbles to meet an objective.

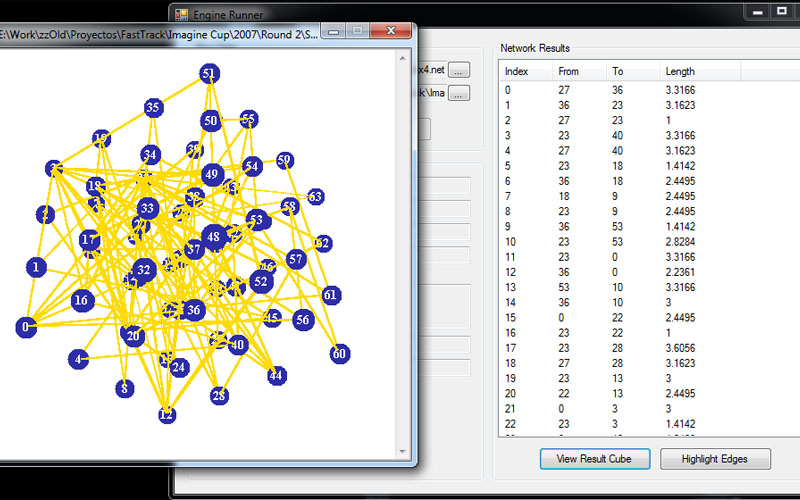

2007 Round 2: The theme was education. Contestants had to find the best possible way to place "neurons" in a cube to minimize the length of their connections.

Summary

From 2006 to 2008, I had the honor of serving as a judge in the Algorithm invitational of Microsoft's Imagine Cup, during Round 2 and the finals. The judging itself took place mostly during the worldwide finals, a 24-hour nonstop competition where 6 of the brightest CS students in the world competed to optimize results for around 10 different NP-Complete problems, plus a final "multi-player" competition, where they wrote an AI to play a game against each other.

Besides the judging part, my main role was to write the code that'd allow participants to compete.

Competitions: Round 2

Round 2 was a 45-day contest to select the best 6 competitors from the 200 best scores of Round 1. The competition revolved around a single problem that had hundreds of test cases. Competitors developed a solution to the problem and uploaded it to a website that would run their code, time it, and calculate their score. From a development standpoint, this meant building:

- A relatively simple website that showed a ranking of competitors and let them upload their DLL files and queue executions of them.

- A server dedicated to running the tests and nothing else, to keep timings as stable as possible. It took the DLL files and test cases, ran them, timed them, and recorded the results.

- Sandboxing of the competitors' code to make sure they couldn't kill our servers or cheat in any way. They were uploading a compiled DLL, after all, and could potentially do anything. I learned a lot about .NET's security model and remoting through this. There were very tricky cases here too, like disallowing the creation of multiple threads (considered cheating), and some specific exceptions, like stack overflows, that can't really be caught and handled.

- Accurate timing of each competitor's execution, which meant forcing .NET to JIT their code before running it; otherwise they would have been penalized with compilation time.

- An SDK for the competitors with sample code, test applications that would let them visualize what their code was doing, and test case generators so they could run their code against thousands of test cases to make sure it worked well.
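The timing concern above can be illustrated with a minimal sketch. This is not the original code, which was .NET; it is written in Python only to show the general idea of paying one-time costs before the clock starts. The names `time_cases`, `solve`, and `warmup_case` are hypothetical, and the untimed warm-up call is a stand-in for forcing the JIT, under the assumption that a solution is a single callable that takes one test case.

```python
import time
from typing import Any, Callable, List, Sequence, Tuple


def time_cases(
    solve: Callable[[Any], Any],
    cases: Sequence[Any],
    warmup_case: Any,
) -> List[Tuple[Any, float]]:
    """Return (output, elapsed_seconds) for each case, after one untimed warm-up."""
    # Untimed warm-up: pays one-time costs (in .NET, JIT compilation on the
    # first call) before any measurement starts. Its result is discarded.
    solve(warmup_case)

    timings: List[Tuple[Any, float]] = []
    for case in cases:
        start = time.perf_counter()  # monotonic, high-resolution clock
        output = solve(case)
        elapsed = time.perf_counter() - start
        timings.append((output, elapsed))
    return timings
```

Calling `time_cases(sum, [[1, 2, 3], [4, 5]], warmup_case=[0])` would return the two sums paired with their measured durations. A real harness would also need the isolation described in the sandboxing bullet, which this sketch leaves out.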

Competitions: Finals

During the finals, the competitors had 24 hours to solve 10 optimization problems and write an AI for a multi-player competition. The tools we provided them with were very different from what they had in Round 2. Almost everything was based on the command line and had to run very fast. Each problem essentially included a sample solution, 100 test cases, and a command-line program that'd run them all and show the results (PASS/FAIL and timing).
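As a rough illustration of what such a runner does, here is a minimal sketch in Python (the actual tools were .NET console applications, and their output format is not documented here). The function name `run_all`, the `(input, expected)` case shape, and the report layout are all assumptions made for the example. Comparing against a single expected output is also a simplification, since the real problems were scored optimization problems.

```python
import time
from typing import Any, Callable, List, Sequence, Tuple

Case = Tuple[Any, Any]  # (input, expected output); a hypothetical shape


def run_all(solve: Callable[[Any], Any], cases: Sequence[Case]) -> List[str]:
    """Run every case and return one report line per case plus a summary line."""
    lines: List[str] = []
    passed_count = 0
    total_seconds = 0.0
    for number, (case_input, expected) in enumerate(cases, start=1):
        start = time.perf_counter()
        try:
            passed = bool(solve(case_input) == expected)
        except Exception:
            # A crashing solution fails the case instead of stopping the run.
            passed = False
        elapsed = time.perf_counter() - start
        passed_count += int(passed)
        total_seconds += elapsed
        status = "PASS" if passed else "FAIL"
        lines.append(f"case {number:03d}  {status}  {elapsed * 1000:.3f} ms")
    lines.append(
        f"{passed_count}/{len(cases)} passed in {total_seconds * 1000:.3f} ms"
    )
    return lines
```

Printing the result with `print("\n".join(run_all(solve, cases)))` gives one PASS/FAIL line with a timing per test case, followed by a summary.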

Given how little time there was to fix problems, the visibility of the event, and Microsoft's reputation being at stake, this was by far the least room for error I've had in my life. It was definitely an interesting experience.

What the client said about my work

"I couldn't be happier with the work. Daniel is smart, productive, easy to work with, easy to contact, and very helpful. As it turned out, I was busy with another project at the time and didn't always have time to monitor Daniel's work closely. No problem, though... Daniel proved more than capable of producing excellent work that met and surpassed the desired business goals, without requiring any help or oversight. I have been involved with software development for 20+ years, and this is truly one of the most pleasant experiences I have had with a contractor."
